The Global Cooling Bet - Part 2

Last week we proposed a bet against the "pause in global warming" forecast published in Nature by Keenlyside et al., and we promised to present our scientific case later. Here it is.

This is why we do not think that the forecast is robust:

Figure 4 from Keenlyside et al. (2008). The red line shows the observations (HadCRUT3 data), the black line a standard IPCC-type scenario (driven by observed forcing up to the year 2000 and by the A1B emission scenario thereafter), and the green dots with bars show individual forecasts initialised with observed sea surface temperatures. All are given as 10-year averages.

1. Their figure 4 shows that, for global mean temperature over the past 50 years, a standard IPCC-type global warming scenario performs slightly better than their new method with initialised sea surface temperatures (see also the correlation numbers given at the top of the panel). This is remarkable, since the standard scenario includes no observed data at all. The green curve is not a time series but a set of individual 10-year forecasts, each of which starts close to the observed climate because it is initialised with observed sea surface temperatures. By construction, then, it cannot drift too far from the observations, in contrast to the "free" black scenario, so you would expect the green forecasts to outperform the black scenario. The fact that they do not shows that their initialisation technique does not improve the model forecast for global temperature.
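The correlation numbers at the top of the panel compare predicted and observed 10-year means. As a minimal sketch of that kind of score, assuming annual anomalies on a common set of years and non-overlapping decades, the code below computes decadal means and a Pearson correlation. The function names and the example numbers are hypothetical, not the paper's data, and the authors' exact procedure (for example overlapping forecast start dates) may differ.

```python
def decadal_means(annual, window=10):
    """Non-overlapping `window`-year means; an incomplete final block is dropped."""
    n_blocks = len(annual) // window
    return [sum(annual[i * window:(i + 1) * window]) / window for i in range(n_blocks)]


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical decadal mean anomalies (K), for illustration only.
observed = [0.00, 0.05, 0.15, 0.30, 0.45]
free_run = [0.02, 0.06, 0.18, 0.28, 0.47]
initialised = [0.01, -0.02, 0.20, 0.25, 0.40]
print("free run:   ", round(pearson(observed, free_run), 3))
print("initialised:", round(pearson(observed, initialised), 3))
```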

2. Their 'cooling forecasts' have not passed the test for their hindcast period. Global 10-year average temperatures have increased monotonically during the entire period they consider (see their red line). Yet the method appears to have already produced two false cooling forecasts: one for the decade centered on 1970 and one for the decade centered on 1999.
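The bookkeeping behind a "false cooling forecast" can be made explicit: a forecast predicts cooling if its decadal mean lies below the preceding observed decadal mean, and that prediction is false if the observed decadal mean did not actually drop. A minimal sketch, assuming aligned lists of decadal means; the function names and numbers are hypothetical.

```python
def strictly_increasing(values):
    """True if every decadal mean is warmer than the one before it."""
    return all(b > a for a, b in zip(values, values[1:]))


def false_cooling_forecasts(obs, fcst):
    """Indices i where the forecast for decade i predicted cooling relative to
    observed decade i-1, but the observations did not cool.

    obs[i] and fcst[i] are the observed and forecast means for decade i;
    fcst[0] is ignored because there is no preceding decade to compare with.
    """
    flagged = []
    for i in range(1, len(obs)):
        predicted_cooling = fcst[i] < obs[i - 1]
        observed_cooling = obs[i] < obs[i - 1]
        if predicted_cooling and not observed_cooling:
            flagged.append(i)
    return flagged


# Hypothetical decadal means (K): observations warm steadily.
obs = [0.0, 0.1, 0.2, 0.3]
fcst = [0.0, -0.05, 0.25, 0.15]
print(strictly_increasing(obs), false_cooling_forecasts(obs, fcst))
```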

3. Their forecast was not only too cold for 1994-2004; it also looks almost certain to be too cold for 2000-2010. For their 2000-2010 forecast to be correct, every remaining month of this period would have to be as cold as January 2008, which was by far the coldest month of the decade so far. That would require extreme cooling over the next two and a half years.
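The arithmetic behind this statement is a simple budget. If a forecast gives a mean anomaly T̄ over N months and k months have already been observed with mean m, the remaining N − k months must average (N·T̄ − k·m)/(N − k). A minimal sketch with a hypothetical function name and made-up inputs; the real check would use the forecast value and the observed monthly series, and would compare the result with the January 2008 anomaly.

```python
def required_remaining_mean(target_mean, observed, total_months):
    """Mean anomaly the not-yet-observed months must have for the
    full-period mean to equal `target_mean`."""
    k = len(observed)
    if not 0 <= k < total_months:
        raise ValueError("need at least one month still to come")
    remaining = total_months - k
    # Total anomaly the full period must sum to, minus what is already "spent".
    return (total_months * target_mean - sum(observed)) / remaining


# Hypothetical illustration: a 120-month window with 97 months observed.
observed_months = [0.40] * 97   # made-up monthly anomalies, K
forecast_mean = 0.30            # made-up forecast decadal mean, K
needed = required_remaining_mean(forecast_mean, observed_months, total_months=120)
print(f"remaining {120 - len(observed_months)} months must average {needed:.3f} K")
```

If the required value is colder than almost any month observed so far, the forecast is effectively out of reach.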

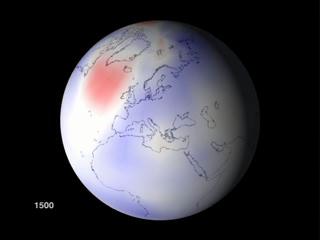

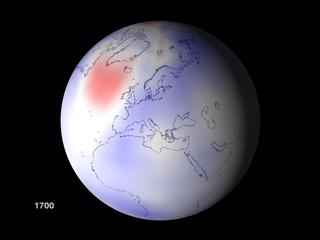

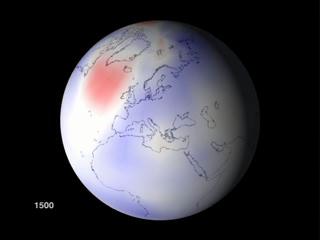

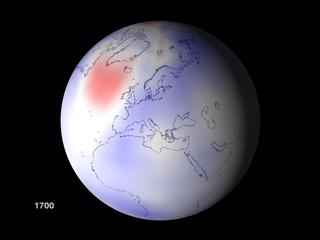

4. Even for European temperatures (their Fig. 3c, not part of our proposed bet), the forecast skill of their method is not impressive. The method has predicted cooling several times since 1970, yet European temperatures have increased monotonically since then. Remember that the forecasts always start near the red line; even so, almost every prediction for Europe has turned out too cold compared with what actually happened. This points to a systematic bias in the forecasts.
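A systematic bias shows up as a mean forecast-minus-observed error that is clearly different from zero, and as too many errors of the same sign. A minimal sketch, with hypothetical names: the sign test assumes each forecast is independently equally likely to be too warm or too cold. Overlapping forecasts are not independent, so this test would overstate the significance; it only illustrates the idea.

```python
from math import comb


def mean_bias(forecasts, observed):
    """Mean forecast-minus-observed error; negative means too cold on average."""
    if len(forecasts) != len(observed) or not forecasts:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(f - o for f, o in zip(forecasts, observed)) / len(forecasts)


def sign_test_p(n_too_cold, n_total):
    """One-sided probability of at least `n_too_cold` too-cold forecasts out of
    `n_total`, if each were independently 50/50 too warm or too cold."""
    return sum(comb(n_total, j) for j in range(n_too_cold, n_total + 1)) / 2 ** n_total


# Hypothetical example: 9 of 10 forecasts too cold.
print(sign_test_p(9, 10))
```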

5. One of the key claims of the paper is that the method allows forecasting of the behaviour of the meridional overturning circulation (MOC) in the Atlantic. For lack of data, we do not know what the MOC has actually been doing, so the authors diagnose the state of the MOC from sea surface temperatures. To put it simply, a warm northern Atlantic suggests a strong MOC and a cool one a weak MOC (though of course it is a little more complex than that). Their method nudges the model's sea surface temperatures towards the observed ones before the forecast starts. But can this induce the correct MOC response? Suppose the model's surface Atlantic is too cold, suggesting that its MOC is too weak. The model surface temperatures are then nudged warmer. But doing so makes the surface waters more buoyant, which tends to weaken the MOC rather than strengthen it! So it seems unlikely to us that this method can get the MOC response right. We would be happy to see this tested in a 'perfect model' setup, in which SST restoring is used to try to make the model forecasts match a previous model simulation (where much more information is available). If it doesn't work in that case, it won't work in the real world.
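The buoyancy argument can be made concrete with two textbook ingredients: Newtonian relaxation of SST towards observations, dT/dt = (T_obs − T)/τ, and a temperature-only linear equation of state, ρ ≈ ρ0[1 − α(T − T_ref)]. This is a minimal sketch assuming that simple relaxation form (the paper's exact nudging scheme may differ); the constants and the hypothetical 1 K cold bias are illustrative assumptions, not values from the paper.

```python
# Assumed illustrative constants; real values depend on temperature, salinity and pressure.
RHO0 = 1027.0     # reference seawater density, kg/m^3
ALPHA = 1.0e-4    # thermal expansion coefficient, 1/K (smaller in cold water)
T_REF = 10.0      # reference temperature, deg C


def linear_density(temp_c):
    """Temperature-only linear equation of state: rho = rho0 * (1 - alpha * (T - T_ref))."""
    return RHO0 * (1.0 - ALPHA * (temp_c - T_REF))


def nudge(t_model, t_obs, tau_days, dt_days, n_steps):
    """Newtonian relaxation dT/dt = (T_obs - T) / tau, integrated with forward Euler."""
    t = t_model
    for _ in range(n_steps):
        t += dt_days * (t_obs - t) / tau_days
    return t


# Hypothetical case: model surface water 1 K colder than observed.
t_before = 4.0
t_after = nudge(t_before, t_obs=5.0, tau_days=30.0, dt_days=1.0, n_steps=90)
drho = linear_density(t_after) - linear_density(t_before)
print(f"SST {t_before:.2f} -> {t_after:.2f} degC, density change {drho:+.4f} kg/m^3")
```

The density change comes out negative: warming the surface water to correct a cold bias makes it lighter, which works against deep-water formation.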

6. When models are switched from being driven by observed sea surface temperatures to calculating their own sea surface temperatures freely, they suffer from what is called a "coupling shock". This is extremely hard, perhaps even impossible, to avoid, as "perfect model" experiments have shown (e.g. Rahmstorf, Climate Dynamics 1995). It presents a formidable challenge for the type of forecast attempted by Keenlyside et al., where exactly such a switch to free sea surface temperatures occurs at the start of the forecast. In response to the coupling shock, a model typically goes through an oscillation of the meridional overturning circulation over the following decades, of a magnitude similar to that seen in the Keenlyside et al. simulations. We suspect that this coupling shock, which is not realistic climate variability but a model artifact, could have played an important role in those simulations. One test would be the perfect model setup mentioned above; another would be an analysis of the net radiation budget in the restored and free runs, where a significant difference could explain a lot.

7. To check how the Keenlyside et al. model performs for the MOC, we can look at their skill map in Fig. 1a. It shows blue areas in the Labrador Sea, the Greenland-Iceland-Norwegian Sea and the Gulf Stream region. These blue areas indicate "negative skill", meaning that their data assimilation method makes things worse rather than improving the forecast. These are the critical regions for the MOC, which indicates that, for either of the reasons given in points 5 and 6, their method is not able to predict the MOC variations correctly. Their method does show skill in some regions, though, and this is important and useful. However, this skill might come from the advection of surface temperature anomalies by the mean ocean circulation rather than from variations of the MOC. That would also be an interesting issue for future research.
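What "negative skill" means can be seen from one common measure, the mean-squared-error skill score of initialised forecasts relative to an uninitialised reference run: MSSS = 1 − MSE(initialised)/MSE(reference). We assume this form only for illustration; the metric mapped in Fig. 1a may be defined differently (for example via correlations), and the function names are hypothetical.

```python
def mse(pred, obs):
    """Mean squared error of predictions against observations."""
    if len(pred) != len(obs) or not obs:
        raise ValueError("need two non-empty sequences of equal length")
    return sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs)


def msss(initialised, reference, obs):
    """Skill of initialised forecasts relative to a reference run:
    > 0 means initialisation helped, < 0 means it made things worse."""
    return 1.0 - mse(initialised, obs) / mse(reference, obs)


# Hypothetical grid-point series (K): here the initialised forecast is worse.
obs = [0.1, 0.3, 0.2, 0.4]
reference = [0.2, 0.25, 0.3, 0.35]
initialised = [-0.1, 0.5, 0.0, 0.6]
print(round(msss(initialised, reference, obs), 3))
```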

8. All climate models used by the IPCC, which are publicly available in the CMIP3 model archive, include intrinsic variability of the MOC as well as tropical Pacific variability and the North Atlantic Oscillation. Some of them also include an estimate of solar variability in the forcing. So in principle, all of these models should show the kind of cooling found by Keenlyside et al., except that they would show it at random points in time rather than at a specific time; predicting the specific timing is the innovation this study seeks. The problem is that the other models show that a cooling from one decadal mean to the next in a reasonable global warming scenario is extremely unlikely and almost never occurs (see yesterday's post). This suggests that the global cooling forecast by Keenlyside et al. is outside the range of natural variability found in climate models (and probably in the real world, too), and is perhaps an artifact of the initialisation method.
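The argument rests on how rarely a decadal mean falls below the previous one when a warming trend is combined with internal variability. A toy Monte Carlo makes the mechanics concrete. It is not an analysis of the CMIP3 archive: the model (linear trend plus AR(1) noise, started from zero) and all parameter values are assumptions chosen for illustration.

```python
import random


def simulate_annual(n_years, trend, phi, sigma, rng):
    """Annual anomalies: linear trend plus AR(1) noise x_t = phi * x_{t-1} + eps_t."""
    x = 0.0
    series = []
    for t in range(n_years):
        x = phi * x + rng.gauss(0.0, sigma)
        series.append(trend * t + x)
    return series


def decadal_cooling_fraction(n_runs=1000, n_decades=10, trend=0.02,
                             phi=0.6, sigma=0.1, seed=0):
    """Fraction of consecutive decadal means in which the later decade is cooler."""
    rng = random.Random(seed)
    coolings = comparisons = 0
    for _ in range(n_runs):
        series = simulate_annual(10 * n_decades, trend, phi, sigma, rng)
        means = [sum(series[10 * i:10 * (i + 1)]) / 10 for i in range(n_decades)]
        for prev, nxt in zip(means, means[1:]):
            comparisons += 1
            coolings += nxt < prev
    return coolings / comparisons


print(f"decadal coolings with trend: {decadal_cooling_fraction():.3f}")
print(f"decadal coolings, no trend:  {decadal_cooling_fraction(trend=0.0):.3f}")
```

Setting the trend to zero shows how often decadal coolings occur from noise alone; raising sigma or phi makes them more frequent.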

Our assessment could of course be wrong: we had to rely on the published material, while Keenlyside et al. have access to the full model data and have worked with it for months. But the nice thing about this forecast is that within a few years we will know the answer, because these are testable short-term predictions, and we are happy to see more of those.

Why did we propose a bet on this forecast? Mainly because we were concerned by the global media coverage, which made it appear as if a coming pause in global warming were almost a given fact rather than an experimental forecast. This could backfire on the whole climate science community if the forecast turns out to be wrong. Even today, the fact that a few scientists predicted global cooling in the 1970s is still used to undermine the credibility of climate science, even though at the time only a small minority of scientists made such claims and they never convinced many of their peers. A public bet between different groups of scientists signals to the public that this forecast is not a widely supported consensus of the climate science community, in contrast to the IPCC reports (about which we are in complete agreement with Keenlyside and his colleagues). Some media reports even suggested that the IPCC scenarios had now been superseded by this "improved" forecast.

Framing this in the form of a bet also helps to clarify what exactly was forecast and what data would falsify the forecast. This was not entirely clear to us from the paper alone, and it took some correspondence with the authors to find out. It also allows the authors to say: wait, this is not how we meant the forecast, but we would bet on a modified forecast as follows… By the way, we are happy to negotiate what to bet about; we're not doing this to make money. We'd be happy to bet, say, a donation to a project to preserve the rain forest, or retiring a hundred tons of CO2 from the European emissions trading market.

We thus hope that this discussion will help to clarify the issues, and we invite Keenlyside et al. to a guest post here (and at KlimaLounge) to give their view of the matter.

http://www.realclimate.org/